REALISM VS ACCURACY FOR AUDIOPHILES

PART 1: ALL THE WORLD’S A (SOUND)STAGE

INTRODUCTION TO THIS SERIES

Accuracy: “The condition or quality of being true, correct or exact; freedom from error or defect; precision or exactness; correctness”

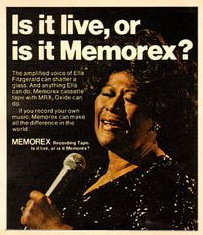

The single defining question for most audiophiles is how close their systems come to making playback sound “live”. For some, this means presenting the program material as though it were being performed in the listening room. For others, it means audibly placing the listener in the performance space in which it was recorded. And for others, it “only” requires a reasonable facsimile of a live performance of some kind, whether or not that performance is an accurate recreation of the original.

This raises the question of how audiophiles define accuracy. Perfect reproduction of a performance is obviously not achievable for many reasons, starting with the simple fact that no system is perfect. There are losses, alterations, and additions to whatever signals are being processed and transduced, in every component in the chain from source to ears (and beyond - our brains are nonlinear processors too).

So we judge accuracy by how closely playback approximates our memory or sonic concept of the original performance. For me, it’s simply not possible to judge the true accuracy of playback without knowing exactly how the recorded performance sounded. Acceptable realism is not accuracy, although it may be pleasing and enjoyable in its own right. True accuracy requires the exact reproduction of a complex set of wide-ranging and diverse parameters and their interactions within the sonic envelope of multiple concurrent attacks, decays, sustains, and releases.

I’ve drawn many flames on AS and other sites for expressing the above belief. But I don’t see how one can claim accuracy without detailed knowledge of the sound of the source material. You can’t conclude that your system is accurate just because the vocalist on the recording sounds like she’s in your room with you – it’s accurate only if she sounds like she did in the master. Even the belief that she should sound like she did at the mic is flawed by the many alterations of that signal (some intentional and some inherent in the methodology &/or equipment) between the output of the mic and the master sound file. So this series of articles will explore many common parameters that are valued, discussed, argued, chased, and worshiped by audiophiles. I’ll attempt to be as objective as possible, using measurable metrics and providing demonstrations of some of what I consider to be the most important effects.

In the next piece in this series, I’ll discuss and illustrate the technical details of creating, altering, and improving sound stage and imaging. After that will come a detailed guide to how and why instruments sound the way they do. I’ll guide you to simple, open source tools and techniques, and we’ll create sound stages ourselves. In this introductory article, we’ll shatter a few myths, gore a few sacred oxen, upset a few apple carts, and remove the veils in order to establish a firm basis for proceeding.

THE REAL SOUND OF INSTRUMENTS LIVE & RECORDED

Musical instruments and human voices have many characteristics that affect the way they sound in a given environment. Different examples of the same instrument can sound very different despite sharing the common elements that make them what they are. Some project loudly and strongly throughout a room, while others have small voices that may be equally pure and sweet but lack dynamic range. A large bore nickel-silver trumpet will sound “bigger” than a medium bore one of equal quality but made of yellow brass (70% copper, 30% zinc), played by the same person. The sound of a trumpet made of gold brass (85% copper, 15% zinc) will usually be between the bright yellow brass and the richly sonorous nickel silver horn. And the sound of my Getzen Eterna large bore trumpet with sterling silver bell is both rich and brilliant, with amazing projection that gives its sound consistency throughout most rooms and fairly low sensitivity to how it’s mic’ed.

One of the clearest differentiators of a Fazioli piano from a Steinway is its responsiveness to touch and resultant dynamic range. It will play clearly at a very low level with the lightest touch, yet it plays more loudly than most other grand pianos when played with a heavy hand. So the same piano sounds delicate on Debussy but roars with Rachmaninoff.

There are Hauser classical guitars that are very similar in appearance, dimensions, and basic construction to those made by Manuel Velazquez. Both are world class instruments, but they sound distinctly different from each other (so much so that artists will embrace one and refuse to play the other, although I wouldn’t kick either one off my own guitar rack). To my ear, Hausers are generally a bit brighter and project better in the upper mids and highs, while Velazquez guitars of similar specification are a bit warmer and fuller.

The same distinctions define every kind of instrument. The sounds of Monette, Schilke, Bach, and other top level trumpets can be very different, with a special nod to the Monette (which is Wynton Marsalis’ instrument of choice). They sound different when played by different players, and some are versatile enough to let a player alter his or her sound purely with technique. They also record differently. A smaller trumpet bell makes the sound generally less full, and it’s measurably quieter for the same physical input. But the same instrument with a smaller bell cuts through a band or a mix better than it does with a bigger bell, because it has a focused midrange spectrum that projects so well.

If you don’t hear these and a thousand similar distinctions we’ll discuss and illustrate in the next article in this series, you’re missing out on a major part of music. Whether that’s because your system isn’t reproducing them accurately or because you haven’t been listening for them is an individual determination – and I’m not getting involved. But I’ve found that many audiophiles who are not musicians either don’t hear or misinterpret a lot of these important subtleties. This is most unfortunate, because it’s part of what makes music so exciting. Instruments themselves will be the subject of the next installment in this series. But for now, let’s go a bit deeper into how instrumental characteristics affect sound stage and discuss how closely what you hear (and “see”) from your system approximates the performance itself.

FREQUENCY-DEPENDENT PROPAGATION OF SOUND FROM INSTRUMENTS

Different instruments radiate different parts of their frequency spectrum in different patterns, which affects how their sounds are heard at different distances, angles, etc from the player. The same trumpet or trombone sounds quite different when heard directly in front or from above (as when sitting in a balcony seat or captured through a flying mic hanging from the ceiling). Harmonics and reflected tones directly influence perceived location of the sound source in all planes, as we’ll discuss later when we look at how engineers manipulate recorded sound to create or alter a specific sound stage.

From this excellent 1999 article in Sound on Sound about mic’ing horns and reeds, here are graphic illustrations of the frequency specific radiation patterns of a generic trumpet, trombone, and clarinet:

Remember that these are 2 dimensional images of a 3 dimensional phenomenon – each of those projection sectors extends 360 degrees and is a portion of a sphere, not a circle. Individual instruments will vary somewhat because of manufacture, metallurgy, style, bore size etc. But the patterns and frequency distributions are generally similar for a given instrument. A trumpet that projects a brilliant tone strongly sends its upper mid and upper partials along the blue and green paths, while a warmer instrument will project a wider arc of low and lower midrange partials with a narrower component of upper mids and highs. The famous “bent” trumpet a la Dizzy has a different projection pattern (mic’ed in the bell here, which adds more brilliance):

The many implications for both live listening and recording are obvious. You can see easily, for example, how a trombone playing in its high register might sound like a trumpet if mic’ed directly on axis with a directional microphone. I strongly recommend reading the linked article completely – it’s fascinating and a strong contributor to this discussion.

There are also many instrumental variants that sound different from the commonly played versions. The straight tenor sax (below left) has a distinctly different sound from a standard curved tenor (below right). More importantly, it projects differently and has a totally different dimension in the sound stage.

The cornet (below left) and the trumpet (below right) look and sound different from each other. They have the same pitch, range, and tubing length (5.4’). But the cornet bore tapers out over the half that leads to the bell, while the trumpet’s bore is cylindrical for a full 2/3 of its length – and a traditional cornet mouthpiece has a deep, funnel-like cup that gives a full, mellow sound while a trumpet mouthpiece has a shallow cup shape yielding a brighter and more directional sound. In addition to affecting the sound itself, these differences also affect how the instrument projects in live performance and how it records. The best mic technique may be different from that for a trumpet in the same setting.

Some trumpets (e.g. the Olds Super) are more directional in projection than others, which really affects how they record with different microphone techniques, as well as how they sound live in different rooms and at different seating locations in the same room. This also affects how “big” and “wide” they seem to be, both live and when recorded. So the instruments themselves greatly affect what we think we hear, and the best recording engineers consider this and a whole lot more. Moving right along…

“SOUND STAGE” AND “IMAGING”

For many audiophiles, the first audible impression of most recorded music comes from the arrangement of performers that we “see” (or think we see) in the listening space. A solo instrumentalist may seem to be sitting dead center between stereo speakers or at any position between / above / behind / lateral to either one. Each member of a small ensemble may appear to be located with almost pinpoint accuracy in relation to the others and the perceived lateral limits of the space in which reproduction is presented – and that space may be delimited by the speakers or may extend outside the physical space between them. The trumpets may all seem to be coming from slightly right of center, or they may seem to be spread across the entire stage. This is most often engineered rather than natural, for a variety of reasons we’ll discuss soon.

There have always been generally accepted and followed seating charts for musical groups of all kinds and sizes. Similar instruments are usually grouped and seated in sections and are subdivided by the part(s) they play. There may be multiple violin parts, for example, each played by one section (known as a “chair” – more later). In a modern symphony orchestra, the “first chair” violins are all playing the same part in unison and sit together at the left front of the stage or other performance area.

Band or orchestra sections may seem to be playing from their “standard” locations in whatever style applies. Individual instruments playing a solo part may emerge from the same space as their sections, or they may appear in a separate and distinct location (which may or may not reflect the actual performance as it was recorded). It’s not uncommon for players in a “big band” (which is traditionally 17 pieces) to leave their seats and come to the front of the stage to play their solos. But many simply stand in place or even remain seated and are heard because they’re individually mic’ed and/or only the rhythm section is playing while they solo.

We’ll discuss the actual placement of both instruments and instrumental sections in different kinds of orchestras and bands (e.g. classical, baroque, modern, romantic, chamber, 17 piece jazz, etc) in a brief while. Each instrument (including the human voice) also has an apparent size within the sonic image created in reproduction. The apparent size, location, and projection of an instrument in a reproduced performance interact to create a sonic image of it that may or may not duplicate its true nature. Let’s explore and examine the interaction between what we hear and what we “see” in a reproduced musical performance. Then we’ll compare what we think we’ve heard to what was actually recorded.

“NOTHING UP MY SLEEVE”, SAID THE RECORDING ENGINEER

Let’s start with a discussion of what we think we hear, what it looks like in our mind’s eye, and how it got where it is. Some recordings reproduce the actual performance very closely, with no processing or other manipulation of the recorded image. But far more deliver an engineered image that’s more precise and detailed than it ever was in performance. We’ll look at some classical music examples later, but the first illustration here is from the jazz world.

It will help you in following and absorbing this discussion to look at and listen to these phenomena as I describe them. Interestingly, despite its shortcomings, YouTube’s better files present information about sound stage and imaging pretty well and consistently, so I’m using them for simplicity to illustrate and compare these points. I have a lot of the original vinyl and Redbook CDs, and they do differ some from later digital reissues and the remasterings and remixes on the ‘Tube. But the YouTube files I’ve chosen illustrate the examples very well. Please don’t criticize the sound quality – focus on the spatial relations for purposes of this article.

RECORDED ON A CONCERT STAGE

Let’s start with this video of “The Great Guitars” (Herb Ellis, Barney Kessel, and Charlie Byrd). Here’s a still of the stage during the recording of the Great Guitars concert, which was recorded live at the 1982 North Sea Jazz Festival. Watch the band as you listen to the video, comparing sight & sound.

When you listen to the recording, each of the players is precisely and perfectly where you think he “should be” based on the above image. Charlie Byrd’s playing that Ovation on our far left, Herb Ellis is in the middle, and Barney Kessel’s at the far right. Further, Joe Byrd (Charlie’s brother) is playing his bass directly in front of his amplifier (also separately mic’ed) slightly behind Charlie and closer to stage center. Notice that each guitar is amplified and each amplifier has one microphone in front of it.

As far as I can tell, Byrd’s guitar, although a nylon stringed acoustic, is not mic’ed at all. There’s a simple pickup in its bridge that’s plugged into a Peavey combo amplifier (an amp that’s as common and ordinary as his Ovation guitar but, unlike that guitar, is used mostly in rock, pop, jazz and country music). Few if any nylon stringed guitar players use this kind of amplifier, and there appears to be a single mic on the floor in front of it just as is done for Ellis and Kessel. He apparently used the Ovation and combo amp rather than any of his beloved classical guitars in order to be loud enough to be heard among his electrified colleagues. No smoke and mirrors are in evidence, though. Notice that regardless of the venue, the instrument, or the engineering, Byrd sounds just like Charlie Byrd!

Notice that Chuck (not Chuch, as is erroneously prominent in the YouTube credits) Redd’s drum kit is at the right rear with multiple mics on and around it, then listen carefully to the recording. The drums are shifted leftward in the recording and the individual drums are much further apart in the sonic image than they are in the performance. His snare and kick drums are closest to their actual stage location, although the snare is further left than the kick drum in the recording and the floor tom sounds like it’s been moved behind Joe Byrd’s bass amp.

So here you have a separate microphone on each instrument, all of which are within 5 to 8 feet of each other in a row on a large stage. If you were sitting in the center of the first row, you might have heard a somewhat similar sound stage to the recorded version, but without the pinpoint instrumental placement you hear in the recording. The amplifiers used by all three guitarists have pretty wide radiation patterns that don’t beam tightly. Those microphones directly in front of the speakers cannot capture this dimension, so the resulting mono track of each guitar lacks width and depth. The engineer can then manipulate each guitarist’s sound with EQ, delay, etc to add back a tightly controlled quasi-dimensionality.

Perhaps those in the first few rows heard some localization. But from most seats in the house, that quintet is basically one large point source, and precise localization of each member within it was not possible. The recorded sound stage was not captured – it was engineered. We’ll discuss how it’s done in a bit, and you’ll learn how to do it yourself if you follow the examples.
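To make the idea concrete, here is a minimal Python sketch of the two simplest placement tools mentioned above: a level difference between channels and a short delay on one of them. It is an illustration under assumptions, not a description of how this particular recording was mixed. The function names (`pan_gains`, `place_mono`), the constant-power pan law, and the sample values are all chosen for the example; EQ is left out entirely.

```python
import math

def pan_gains(position):
    """Constant-power pan law (an assumed, commonly used choice).

    position: -1.0 = hard left, 0.0 = center, +1.0 = hard right.
    Returns (left_gain, right_gain); the squared gains always sum to 1.
    """
    angle = (position + 1.0) * math.pi / 4.0   # maps [-1, 1] onto [0, pi/2]
    return math.cos(angle), math.sin(angle)

def place_mono(mono, position, delay_samples=0):
    """Turn one mono track (a list of floats) into a left/right pair.

    The level difference comes from the pan law. The optional delay is
    applied to the channel on the far side of the chosen position, which
    reinforces the apparent direction. Both channels are zero-padded so
    they end up the same length.
    """
    left_gain, right_gain = pan_gains(position)
    left = [s * left_gain for s in mono]
    right = [s * right_gain for s in mono]
    pad = [0.0] * delay_samples
    if position < 0:        # source placed left: right channel arrives late
        left, right = left + pad, pad + right
    elif position > 0:      # source placed right: left channel arrives late
        left, right = pad + left, right + pad
    else:                   # centered: no inter-channel delay, just pad
        left, right = left + pad, right + pad
    return left, right

# Hypothetical five-sample "guitar" track placed left of center.
guitar = [0.0, 0.5, 1.0, 0.5, 0.0]
left, right = place_mono(guitar, position=-0.6, delay_samples=2)
```

The point of the sketch is only that a close-mic'ed mono track carries no position of its own. Whatever location it ends up with in the stereo image is assigned by numbers like `position` and `delay_samples`.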

Pictured below is another classic example of the use of multiple microphones on a small group and the not so subtle interplay between engineering and sound stage:

This one’s the Modern Jazz Quartet performing their last concert together at the controversial Avery Fisher Hall in Lincoln Center. The acoustics there are far from ideal today, even after many changes over the years to counter the disastrous effects of politics and pandering on a design that started out with serious problems and ended up far from the original acoustician’s dreams. If you’re interested (and you should be – it’s fascinating and quite relevant to this discussion), here’s a good summary of the problems and attempted fixes. The New York Times’ review of opening night is also instructive. Between these two references, you’ll start to understand how different even the world’s most highly acclaimed performers and concert venues sound in live performance in comparison with recordings of those performances.

Here’s the concert video from which that still was taken. Listen to it carefully with your eyes closed and look for the vibes in the sound stage you “see”. Then look again at the picture of Milt Jackson’s vibes and notice that there are two mics directly over the vibraphone, which is at the far right of the stage. His instrument was moved from its physical position to center stage in mixing and mastering. I have the vinyl, which isn’t any closer to the actual layout for the concert. And it doesn’t matter, because the audience also didn’t hear them as they’re placed - the sound stage varied from section to section if not from seat to seat.

ACCURACY VS REALITY

These two are excellent examples of program material whose reproduction would be considered unsatisfactory by many audiophiles if it were completely accurate and a true image of the recorded performance as it was heard by the original audience. In these two examples (as in countless thousands of others), accurate playback would have presented little directional information on instrumental location and not a clue as to how the players were positioned. Everything from instrumental timbre to location would have been presented as heard from a specific location in the hall and would have been audibly different if that location were shifted by even a few seats in any direction. In short, most live performances do not sound like their recordings for many good reasons and many not-so-good ones.

Recording and mastering engineers create the sonic locations occupied by the performers in most recordings. In the case of the Great Guitars, our two ears were replaced by a dozen or so microphones, each positioned far from the ears of any audience member. Each guitar was captured by one mic (plus the inevitable low level bleed into the others’ microphones, which is hardly true ambiance). The multiple sound sources were then combined and manipulated to “assign” locations to each player. For the MJQ, the only thing you need to see is those two mics hanging over the vibes to realize that our ears would have heard the instrument quite differently from those mics.

I’ll finish this section with an image that’s astounding to me – here’s Milt Jackson at another MJQ concert with 3 mics over the vibes (and at least one drum mic close enough to grab some bleed)! We mere humans seem to hear vibes just fine with one pair of ears that are close enough together to serve our brains as a stereo pair of directional mics. One wonders what 3 mics, each less than 2 feet apart and less than 2 feet above a set of vibes, offer over a single mic if the goal is a realistic capture of the performance. You can make those vibes sound as wide as your listening room if you pan the end mics full left and right – but that’s not what they sound like in real life. That SOS article on recording horn sections reinforces this concept: “the best section sound is achieved with relatively distant miking, say, a couple of meters, either with a single mic aimed to provide even coverage of all players, or with some form of stereo pair. In the latter case, the precise mic placement will depend on the size of the section and the kind of stereo spread required, but would typically be three metres in front and a metre or so above the instruments”. It doesn’t take 3 mics to record a vibraphone, especially in a group.
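A little arithmetic shows how much width the pan pots alone can manufacture from three close mics. The Python sketch below is a rough model, not a measurement. The mic levels for a single struck bar are invented for illustration, the pan law is an assumed constant-power law, and the three signals are summed as if perfectly in phase, which ignores arrival-time differences between the mics.

```python
import math

def pan_gains(position):
    """Constant-power pan law: -1.0 hard left, 0.0 center, +1.0 hard right."""
    angle = (position + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)

def channel_levels(mic_levels, width):
    """Sum three mic signals into left/right amplitudes.

    mic_levels: linear amplitude of one note at the (low-end, middle,
                high-end) mics. Hypothetical values, not measured ones.
    width:      0.0 pans all three mics to center; 1.0 pans the two end
                mics hard left and hard right.
    """
    positions = (-width, 0.0, width)
    left = right = 0.0
    for level, position in zip(mic_levels, positions):
        left_gain, right_gain = pan_gains(position)
        left += level * left_gain
        right += level * right_gain
    return left, right

def level_difference_db(left, right):
    """Inter-channel level difference in dB (positive means left is louder)."""
    return 20.0 * math.log10(left / right)

# A bar struck at the low end: loudest in the nearest mic, weaker in the others.
low_bar = (1.0, 0.5, 0.25)

for width in (0.0, 0.5, 1.0):
    left, right = channel_levels(low_bar, width)
    print(f"width {width:.1f}: {level_difference_db(left, right):+.1f} dB")
```

With these made-up levels, the same note sits dead center when `width` is 0 and leans roughly 7 dB toward the left speaker when the end mics are panned hard, even though nothing about the instrument or the performance has changed.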

SOUND STAGE VS IMAGING

Two features of playback that seem very closely related to perceived accuracy and realism of reproduction are sound stage and imaging. We all use the terms as though everyone knows exactly what they mean. But I’ve been unable to find anything close to a universal or standard definition of either one, and there’s clearly no unanimity of thought about them. A site search of AS for the key word “soundstage” brought 200 pages of 25 hits each, for a total of about 5000 posts in hundreds of threads – so the topic is clearly of interest to audiophiles. Let’s explore it a bit.

Here’s an interesting definition (from Rtings.com) that distinguishes between sound stage and imaging:

“Sound stage determines the space and environment of sound...it determines the perceived location and size of the sound field itself, whereas imaging determines the location and size of the objects within the sound field. In other words, sound stage is the localization and spatial cues not inherent to the audio content (music)...This differs from imaging, which is the localization and spatial cues inherent to the audio content.”

As you might expect, the experts don’t agree – there’s a sizable contingent of knowledgeable and experienced audiophiles (both lay and in the industry) who believe that sound stage and imaging are synonymous. Audioengine, for example, says this about sound stage and imaging on its website:

“In the world of audiophiles, sound stage (or speaker image) is an imaginary three-dimensional space created by the high-fidelity reproduction of sound in a stereo speaker system; in other words, the sound stage allows the listener to hear the location of instruments when listening to a given piece of music.”

Based on the common use of parametric EQ and selective delay to alter image width and height, which we’ll explore in a little while, I submit that Audioengine’s definition is closer to reality but also a bit off the mark. The listener hears the locations of instruments that the engineer wants him or her to hear – but they’re most often nowhere near their actual locations relative to each other when the recording was made. In this article, we’ll focus on localization and spatial cues in audio playback. I’ll try to separate those that are “inherent to audio content” from those that are not, recognizing that it’s not always possible. Many such cues present in a recording are subject to major influence and alteration by the playback environment and equipment. So I’ll try to isolate those that we can change from those that we can’t.

IS THERE REALLY A SOUND STAGE?

The main focus of this article is reconciliation of the spatial image we “see” when listening to music reproduction with the original spatial relations among the performers, their instruments, and the venue in which the performance was recorded. “Sound stage” is a perfectly fine term for this, although I’m happy to interchange whatever alternatives the reader may prefer. The principle is simple: when we listen to playback of a recorded performance, we not only hear the instruments (which includes voices, since the larynx was the original instrument), but we also hear the interactions among them and with their acoustic environment – and we often think we can see where they are within the space created by the interaction between our systems and our minds.

WHERE’S WALDO?

Here’s a little test for you. I’ve mixed three mono tracks into a series of stereo masters in which the instruments are located in different places on the sound stage. This is purely a demonstration of the fact that the performance you “see” and hear from your speakers need not (and usually does not) reflect a real performance in any way. I’m playing all the instruments. Do not criticize the playing or the choice of music – these are both irrelevant to this demo and chosen purely to let me lay down the tracks and complete the mixing quickly with minimal errors. Two of the files are stereo mixes with the instruments moved across the stage. The 3rd is an engineered stereo mix with tricks I’ll demonstrate and show you how to do yourself in the next article. And the 4th is the original mono mix. The linked files are in random order - you should be able to tell the difference and place the instruments visually as I did electronically. Try it.
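If you'd like to try a bare-bones version of this kind of test yourself, the Python sketch below renders three mono tracks into stereo with whatever layout you choose, using only the standard library. It is not the workflow used for the files above. Plain sine tones stand in for recorded instruments, and the pan law, function names, frequencies, and layouts are all assumptions made for the example.

```python
import io
import math
import struct
import wave

SAMPLE_RATE = 44100  # samples per second (CD rate, chosen for the example)

def tone(freq_hz, seconds, amplitude=0.25):
    """Stand-in for a recorded mono track: a plain sine wave."""
    count = int(SAMPLE_RATE * seconds)
    step = 2.0 * math.pi * freq_hz / SAMPLE_RATE
    return [amplitude * math.sin(step * i) for i in range(count)]

def pan_gains(position):
    """Constant-power pan law: -1.0 hard left, 0.0 center, +1.0 hard right."""
    angle = (position + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)

def mix_to_stereo(tracks, positions):
    """Sum equal-length mono tracks into left/right sample lists."""
    length = len(tracks[0])
    left = [0.0] * length
    right = [0.0] * length
    for track, position in zip(tracks, positions):
        left_gain, right_gain = pan_gains(position)
        for i, sample in enumerate(track):
            left[i] += sample * left_gain
            right[i] += sample * right_gain
    return left, right

def stereo_wav_bytes(left, right):
    """Encode a left/right pair as 16-bit PCM stereo WAV data."""
    frames = bytearray()
    for l, r in zip(left, right):
        l = max(-1.0, min(1.0, l))   # clip to the legal range
        r = max(-1.0, min(1.0, r))
        frames += struct.pack("<hh", int(l * 32767), int(r * 32767))
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(2)
        wav.setsampwidth(2)          # 2 bytes = 16 bits per sample
        wav.setframerate(SAMPLE_RATE)
        wav.writeframes(bytes(frames))
    return buffer.getvalue()

# Three stand-in "instruments", then the same tracks in different layouts.
tracks = [tone(220.0, 0.1), tone(330.0, 0.1), tone(440.0, 0.1)]
layout_a = stereo_wav_bytes(*mix_to_stereo(tracks, (-0.8, 0.0, 0.8)))
layout_b = stereo_wav_bytes(*mix_to_stereo(tracks, (0.8, -0.8, 0.0)))
centered = stereo_wav_bytes(*mix_to_stereo(tracks, (0.0, 0.0, 0.0)))
```

Write any of the resulting byte strings to a `.wav` file and play it back. The three "performers" swap places between `layout_a` and `layout_b` although the underlying tracks are identical, and in `centered` the two channels are the same, so everything collapses to the middle.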

As I hope you now understand, trying to pinpoint the locations of the instruments in a musical ensemble from listening to a recording can be fun and fascinating – but it’s most often a fool’s errand. It’s also somewhere between difficult and impossible to do from recordings that weren’t engineered to provide precise localization of individually miked instruments / sections and to match them electronically to the actual locations of the performers. We’re about to get to how this is done. I’ll offer some ways to demonstrate it to yourself and to learn more about what works and how and why it does. But with very few exceptions, recordings that deliver precise, frequency-independent localization of all the instruments are manipulated from multiple mics and do not sound like the original performance. Here’s how you know this.

THE EVOLUTION OF ORCHESTRAL SEATING (HERE’S A GOOD ARTICLE IF INTERESTED)

There are standard seating arrangements for many kinds of musical units and ensembles. And there are historical reasons for most of them, with a strong focus on composers and conductors. Let’s start with the latter, because the advent of a conductor (the first and only non-playing musician in an orchestra) changed forever the way orchestral music is composed, played, heard, and understood. Before about 1800, orchestras were small and generally guided (rather than conducted) by either the composer of the work being played or by the concertmaster (the leader of the 1st violin section).

As composers began expanding the number of parts in their works, orchestras added the necessary instruments – and the size of the average classical orchestra grew from twenty or fewer in the 18th century to more than a hundred by the end of the 19th century. With this expansion, composers lost the luxury of being able to position instruments where they wanted them – rearranging 100 players for each work on the program was simply not practical. Further, large ensembles needed a conductor to keep them together and guide the players and sections to the balance and expression intended by the composer and interpreted by the conductor.

A modern symphony orchestra is most often staged in a standard fashion. The back row includes percussion on the left, although the tympani are often to the far right of the back row. The big horns, e.g. trombones, French horn, tuba etc, are usually in the center portion of the back row. The next row is home to the horns, clarinets, bassoons and trumpets, and in front of this are the flutes and oboes. The violins (both first and second desks) sit to the left of the conductor, with violas placed in front of the conductor and slightly to his or her right. Further to the right are the cellos, with double basses at the far right. Keyboards (which are not part of the basic orchestra and are added for compositions that require them) are usually on the left at least midway back, unless the composition being played features the piano (e.g. symphony for piano and orchestra, a piano sonata, or a concerto for single instrument with piano accompaniment). If the piano is a featured instrument, it will be moved to the front of the stage. Here’s a “standard” chart for today’s generic symphonic orchestra:

Compare this with a typical chart for a Baroque orchestra (14 pieces, in this example):

Orchestras of the Romantic period (roughly 1830 to 1900) varied greatly in size, composition, placement, etc as the music became more complex and sophisticated. Up to the end of the 19th century, composers often placed the two violin desks on either side of the conductor, to highlight the “call and response” approach to compositions of the time in which the melody parts would switch between 1st and 2nd violin sections and the sonic focus would leap across the stage from 1st to 2nd violins and back again like an early stereo demo record. Basses and cellos were often placed where the 2nd violins are usually seated today, with brass on the left, percussion on the right, and tympani centered between them. The horns were also in the middle, in front of the trumpets.

Look at this picture of Boston Baroque in the Sanders Theater at Harvard playing Bach, who was an innovator at contrapuntal invention and wrote parts that bounce between 1st and 2nd violins:

Now listen to the concert HERE. Laterality is clear, and individual instruments are “visible” on the sonic stage when they’re playing solo, although they’re not point sources – their sounds can be localized to their general position on left, center or right with some width and bloom. Sections are also localizable to their sides of the stage, and in a good recording played over good equipment, each section sounds like multiple instruments playing together rather than one giant one. Even in this average quality video sound track, the violin sections sound like groups of instruments playing in unison rather than a pair of giant violins.

These are some of the hallmarks of simple microphone technique in a hall with favorable acoustics for accurate capture of the program. Thanks to the acoustics of the setting and instruments of sufficient quality to project well while sounding wonderful, the microphones “hear” pretty much what we hear. This is especially critical for music in which subtle differences are essential to deliver the composer’s intent. For example, the 1st violins are seated with their highest strings toward the audience while the 2nd violins are seated with their lowest strings toward the audience. As the tops of the finest Cremonese violins were carved differently on each side (presumably to bring out the best tone and projection from strings of different tension and pitch – see THIS ARTICLE and THIS STUDY for detail, if interested), this projects a different sound to the audience when playing the same music from side to side.

Interestingly, modern carved string instruments are most often made with symmetric top plates, which both compromises their tone and projection compared to the asymmetric instruments of Stradivarius et al. and reduces the variance when heard from either side. But, as you can learn from the two links above, the great instruments were made differently despite the myths that they were not.

Microphone placement is critical to capture these subtle distinctions, and accuracy in reproduction is essential to deliver them in playback. Listen to the finale in this recording of Mozart’s Jupiter Symphony (from the 2012 Mozart Festival at the Opera House in Coruña, Spain) to hear a wonderful and classic example of this counterpoint from the left (first) and right (second) sections. I don’t hear a difference in violin tone from side to side, but that may be as much from the use of YouTube video sound tracks as from its absence in the hall and/or on the recording.

Stokowski revolutionized orchestral seating in the 1920s by moving the players around the stage to locations that he believed brought out the best from the orchestra on any given piece. He once moved the violins behind the horns, which outraged the Board to the point at which they threatened to fire him. But he later arranged the strings in descending order left to right from high to low (violin to bass) across the stage, which (as you can see from the “modern standard” chart above) defined the modern orchestra’s seating chart.

Although the audience cannot often localize individual instruments among their sections in the performance except during solos, general positioning of sections is clearly discernible in halls with decent acoustics and on well made recordings in those venues. Keep in mind that many halls have obstructions (pillars, half walls, etc.) that may distort or reduce sonic perspective from some seating positions, along with uncontrolled reflections that can grossly alter the apparent locations of the instruments. This has always been a criticism of Avery Fisher Hall in Lincoln Center, for example, as discussed in the next section.

ACOUSTIC QUALITY OF CONCERT HALLS

I strongly recommend reading the 2016 article by Leo Beranek called Concert hall acoustics: Recent findings (J Acoust Soc Am 139, 1548). If you don’t know who Beranek was, you should. He was a founder of the firm Bolt, Beranek, and Newman – that’s the firm that designed the concert halls at Tanglewood and Lincoln Center, and did the acoustics for the United Nations. If you become more interested, he wrote many wonderful books that offer great insight into what we enjoy at concerts, how, and why.

One of his major interests was the ranking of the world’s concert halls (see this article for an interesting use of his database). His book, Concert Halls and Opera Houses, contained his descriptions, photographs, drawings, and architectural details of 100 existing halls in 31 countries. He would have fit right in at AS because he combined the subjective data of the ranking with objective data in order to investigate the relationship between the geometrical and acoustical properties of halls, and their ranking. He spent a lot of time running correlation analyses among the subjective and objective data he collected, in order to understand why great halls made the music sound so wonderful and how he could improve on them in new designs and buildings.

The first question any lover of classical music usually asks an acoustician is, "Which are the best halls in the world?" - and this begins with how well, accurately, and enjoyably performances can be heard from each and every seat. The response is always surprising to the unknowing: the three halls rated highest by world-praised conductors and music critics of the largest newspapers were built in 1870, 1888, and 1900. The Musikverein in Vienna opened in 1870 and is still held by most to be the best (acoustically) in the world. Beranek attributed its superiority to “... its rectangular shape, its relatively small size, its high ceiling with resulting long reverberation time, the irregular interior surfaces, and the plaster interior”. These are characteristics that facilitate consistent presentation to all seats.
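Beranek’s link between a high ceiling and a long reverberation time can be illustrated with Sabine’s classic formula, RT60 ≈ 0.161 V / A, where V is the room volume in cubic meters and A is the total absorption in metric sabins. The sketch below is only a rough illustration under stated assumptions: the hall dimensions and absorption coefficients are made-up round numbers (not measurements of the Musikverein or any real hall), and the function name is my own.

```python
def sabine_rt60(length_m, width_m, height_m,
                floor_absorption=0.80, hard_surface_absorption=0.05):
    """Estimate the reverberation time (seconds) of a shoebox hall with Sabine's formula.

    The absorption coefficients are illustrative assumptions: the floor stands
    in for an occupied audience area, the walls and ceiling for hard plaster.
    """
    volume = length_m * width_m * height_m
    floor_area = length_m * width_m
    ceiling_area = floor_area
    wall_area = 2 * (length_m + width_m) * height_m
    total_absorption = (floor_area * floor_absorption
                        + (ceiling_area + wall_area) * hard_surface_absorption)
    return 0.161 * volume / total_absorption


if __name__ == "__main__":
    # Same hypothetical floor plan (50 m x 20 m), three ceiling heights.
    for height in (10, 14, 18):
        rt60 = sabine_rt60(50, 20, height)
        print(f"ceiling {height} m -> RT60 about {rt60:.2f} s")
```

In this simplified model most of the absorption sits in the audience area, so raising the ceiling adds volume faster than it adds absorption and the estimated reverberation time grows. Real halls are far more complicated, which is why Beranek relied on measured data.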

Second is Boston’s Symphony Hall, another shoebox that opened in 1900. And third is the Concertgebouw, which opened in Amsterdam in 1888 and is also rectangular in shape. Even modern halls like Tokyo’s Opera City (opened in 1998) share the same basic characteristics, from rectangular shape to irregular interior surfaces to thinly padded seats. But they all share another important characteristic – despite definite laterality and localization of sections in general, only solo instruments are sonically visible as distinct sound sources, and only from positions close enough to the stage to let direct radiation predominate over reflected sound.
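The distance at which direct and reflected sound are equally loud is usually called the critical distance. For anyone who wants a feel for the numbers, here is a minimal sketch using the standard diffuse-field approximation d_c = sqrt(Q·A / 16π), with the absorption A backed out of Sabine’s formula. The hall volume and reverberation time are hypothetical round figures rather than data for any of the halls named above, and the function name and the directivity values are assumptions for illustration.

```python
import math


def critical_distance(volume_m3, rt60_s, directivity=1.0):
    """Distance (m) at which direct and reverberant sound levels are equal.

    Uses the diffuse-field approximation d_c = sqrt(Q * A / (16 * pi)),
    with the absorption A taken from Sabine's formula (A = 0.161 * V / RT60).
    """
    absorption = 0.161 * volume_m3 / rt60_s
    return math.sqrt(directivity * absorption / (16 * math.pi))


if __name__ == "__main__":
    # Hypothetical hall: 15,000 cubic meters with a 2.0 second reverberation time.
    # Q = 1 is an omnidirectional source; Q = 4 is an assumed, more directional one.
    for q in (1.0, 4.0):
        d = critical_distance(15000, 2.0, directivity=q)
        print(f"Q = {q:.0f}: direct sound dominates within roughly {d:.1f} m")
```

The estimate comes out at only a few meters for a large reverberant room, which fits the observation that most of the audience hears far more reflected than direct sound.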

And then there are those radical settings and huge performances in which individual instruments are simply part of a tightly woven sonic tapestry. The Paris Philharmonie is a brand new (2014) and radically different hall whose acoustics are widely accepted as “pretty good” despite the fact that it’s a 360 degree “theater in the round” in which no two seating sections see and hear the same presentation.

Here’s a fascinating performance there with hundreds of players and singers. Localization by section is pretty good, and I don’t know how it was miked – but it seems to me to capture the performance accurately without being “too accurate”. Amadeus designed and manufactured the speakers throughout, with the most amazing quote from their Director of R&D being this: “The requirements of the client when we began were practically unrealisable”! Merging did the audio systems design, as I recall, and there are many, many microphones all over the building that can be activated from master consoles. I have the acoustics design paper, and the specs are amazing. But the hall does not facilitate a consistent sound stage at all seating locations.

There are excellent live recordings out there from the vinyl era that capture most of the performance intact, such as some of the early Umbrella and Sheffield direct-to-disc series, many Nonesuch records, and a whole lot of early classical recordings (including a lot of mono 78s that exude character and liveliness). Most of the best were made with simple techniques on simple but high quality equipment in settings with acoustics that were often good but rarely great (with the exception of many symphonic pieces and a few jazz concerts that were captured in some of the world’s best halls).

CAPTURING THE SOUND STAGE

Most excellent recordings made with simple technique lack pinpoint accuracy because it wasn’t there in the performance. If the mics are on stage and directly in front of the performers, localization and separation may be overly emphasized, which can often inflate the apparent size of the instruments and voices.

I won’t go into microphone techniques, both because it’s a subject by itself and because there are active AS members and participants with a lot more experience than I have at capturing live performances and bringing them out of speakers as a coherent presentation that sounds and “looks” like it did when performed. The key element to keep in mind is that we only have a spaced pair of partially directional sensors with which to listen to music. Apart from the individual differences in auditory acuity and the ability to localize sound sources, we’re all constrained by having the same limited array of biologic microphones.
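One of the cues that our spaced pair of “biologic microphones” gives us is the tiny difference in arrival time between the two ears. A rough feel for its size comes from Woodworth’s classic spherical-head approximation, ITD ≈ (r / c)(θ + sin θ). The sketch below assumes a head radius of 8.75 cm and a speed of sound of 343 m/s, both generic textbook values rather than anything measured, and the function name is mine. It also ignores level differences and the filtering of the outer ear, which matter just as much.

```python
import math


def interaural_time_difference(azimuth_deg, head_radius_m=0.0875,
                               speed_of_sound=343.0):
    """Woodworth's spherical-head estimate of the arrival-time gap between ears.

    azimuth_deg is the source angle from straight ahead (0) to directly to one
    side (90). Returns the time difference in seconds.
    """
    if not 0 <= azimuth_deg <= 90:
        raise ValueError("this simple model covers azimuths from 0 to 90 degrees")
    theta = math.radians(azimuth_deg)
    return (head_radius_m / speed_of_sound) * (theta + math.sin(theta))


if __name__ == "__main__":
    for angle in (0, 15, 30, 60, 90):
        itd_us = interaural_time_difference(angle) * 1e6
        print(f"source at {angle:2d} degrees -> about {itd_us:.0f} microseconds")
```

Even for a source directly to one side, the gap stays well under a millisecond in this model, which gives some idea of how fine the timing information is that a recording and playback chain has to preserve.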

To gain some insight into the beauty of well miked, well recorded non-classical music, I strongly recommend any recording produced and/or engineered by Tom Dowd. He was a pioneer in binaural and stereo recording who made some fabulous discs for Atlantic. If you get the chance, grab the vinyl and/or the MoFi CD of MJQ’s Blues at Carnegie Hall. This 1966 masterpiece is simply a rollicking great time that was a benefit concert for the Manhattan School of Music, fortunately recorded by Dowd (with Joe Atkinson and Phil Lehle) and released by Atlantic. The original release was mono and will open your eyes and ears to the potential for perceiving multiple instruments to be on a virtual sound stage even when listening to a mono recording from one speaker system.

There are many remote recordings of music from around the world on Nonesuch (made by David Lewiston and produced by Peter Siegel) that are truly natural and excellent for assessing SQ. It’s impossible to judge accuracy using them because so few humans have ever heard that music in performance, but it’s well worth finding and listening to some of these to gain an appreciation for true vivacity and ambiance.

I strongly recommend checking out the 17,000+ recordings made by Alan Lomax over the course of his career. Using the best tape equipment he could drag into the field, he captured folk music of all kinds from around the world between 1946 and the ’90s with amazing fidelity. Here he is with his Ampex 601, which was state of the art at the time, in 1959:

Although we can’t readily access his tapes in any semblance of their original form (copies, remasters etc), his entire body of work has been digitized – you can read about and access it HERE. This collection is distinct from his earlier work in which he captured early blues musicians like Leadbelly on acetates and aluminum discs. That archive is not up to the SQ of the taped works, but it’s fascinating to hear and available in part from The American Folklife Center.

The most accurate recordings I have are live performances that were either made by me on my high speed Crown deck or by similarly serious others like the commercial high speed 7” reel tape release of the Tequila Mockingbird Chamber Ensemble from about 1975. But truly accurate SQ, imaging, and “feel” are not common in commercial recordings. This does not make them bad – but it makes assessing your system difficult and leads to false assumptions. What you see is often not what you get. In the next part of this series, I’ll show you how it’s done and offer some ways to do it yourself.
