In this article, I independently adjust the amplitude (with digital eq) and bit-depth of a digital music file to identify at what threshold level I can start detecting a difference in sound quality compared to the original music file. In other words, how far away from bit-perfect can I detect an audible change in SQ. All music files are available for download. As a listening experience, feel free to participate to determine your own audibility threshold level. To correlate the listening tests with measurements, the differencing technique described in JRiver Mac versus JRiver Windows Sound Quality Comparison is being used.



Editor's Note: This article by Mitch is somewhat technical at times. I encourage everyone to read it and ask questions if needed. This is an educational article in which Mitch uses his own ears to determine how far audio must stray from bit-perfect before he notices an audible difference. The article is not about the equipment used and isn't a treatise on the audibility of straying from bit-perfection. Please read carefully and don't read more into the conclusions than Mitch states himself. - Chris Connaker


Establishing a Bit-perfect Baseline

First, I need to establish whether my computer audio system can play back music bit-perfectly. Wikipedia’s definition of bit-perfect is, “the digital output from the computer sound card is the same as the digital output from the stored audio file. Unaltered pass-through. The data stream will remain pure and untouched and be fed directly without altering it. Bit-perfect audio is often desired by audiophiles.”

A simple way to test for bit-perfect playback is to record the digital output of a software music player, then compare the recording to the original music file stored on the hard disk to see whether the data stream has been altered in any way.

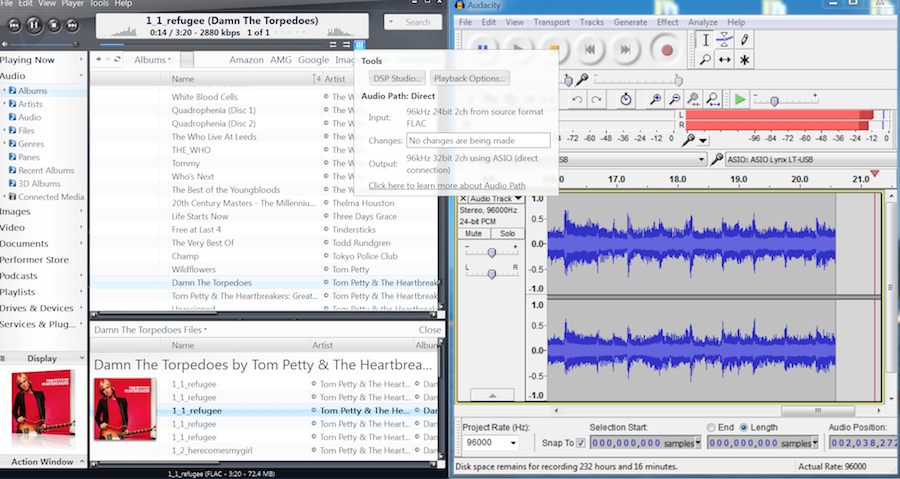

My Lynx Hilo’s USB audio driver supports multi-client ASIO applications. That means I can play a song in a music player application while recording its data stream in another application (e.g. Audacity via ASIO), a technique sometimes referred to as digital loopback. If your digital audio system has this capability, then by following the same procedures outlined here, you can precisely determine your own audibility threshold level. If not, you can still download the music files and take part in the listening test, which will likely put you in the right range. What you gain by running your own tests is the precision to determine your audibility threshold using your own gear.

In the screenshot below, Tom Petty’s 24/96 Refugee (provenance PDF) is playing in JRiver MC18 while the bit-stream is recorded in Audacity at 24/96. The music player is configured for bit-perfect playback:

Once recorded, the file is edited to a defined start point and total number of samples. This digital audio editing process is described in detail in the article: JRiver Mac versus JRiver Windows Sound Quality Comparison. The important part is that each test recording starts on the same sample and has the same number of samples. There are no gain adjustments.

The steps I follow, in summary: open the recording in Audacity and zoom way in on a transient peak near the start of the recording. Zoom in far enough to see individual samples, pick a sample point whose location I can recognize again every time a new recording is made (by the shape of the surrounding samples; I take a screenshot), and make that the start point. Making this a repeatable process is not as easy as it seems, and the test is invalid if it is off by even one sample. However, after a number of tries one gets the hang of it. Delete all samples to the left of the start point. Then enter 5,760,000 samples (picked arbitrarily in previous tests and kept for consistency) in the end samples textbox. Delete all samples to the right of the cursor location. Export as 24 bit WAV.
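For readers who prefer to script the trim rather than do it by hand in Audacity, here is a minimal sketch using Python's standard `wave` module. It assumes you have already found the start sample by eye as described above; the function name `trim_wav` is hypothetical. It copies raw PCM frames unchanged, so no gain or conversion is applied. Note that older Python versions may reject the WAVE_FORMAT_EXTENSIBLE header that some editors write for 24-bit files.

```python
import wave

def trim_wav(src_path, dst_path, start_frame, num_frames=5_760_000):
    """Copy num_frames frames starting at start_frame from src to dst, byte for byte."""
    with wave.open(src_path, "rb") as src:
        params = src.getparams()
        if start_frame + num_frames > params.nframes:
            raise ValueError("recording is too short for the requested span")
        src.setpos(start_frame)               # jump to the chosen start sample
        frames = src.readframes(num_frames)   # raw PCM bytes, no sample conversion
    with wave.open(dst_path, "wb") as dst:
        dst.setparams(params)                 # same channels, sample width, and rate
        dst.writeframes(frames)               # header frame count is fixed up on close
```

Because both the recording and the original are cut with the same `start_frame` offset logic and the same `num_frames`, the resulting files can be compared sample for sample.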

Every subsequent test recording in this article (and the previous article) is edited exactly this way and provides 60 seconds of music, or 5,760,000 samples x 24 bits = 138,240,000 bits of data per channel. That is long enough to listen to and form an opinion. The listening can be done casually, or you can load the samples directly (no editing required) into an ABX comparator or whatever your preferred listening method is.
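The arithmetic behind those numbers can be checked quickly. This sketch assumes a stereo 24/96 file; reading "MB" as 2^20 bytes, the PCM payload works out to roughly the 33 MB quoted for the download files (a WAV header adds a few dozen bytes more).

```python
SAMPLE_RATE = 96_000   # samples per second, per channel
BIT_DEPTH = 24         # bits per sample
CHANNELS = 2           # stereo
NUM_SAMPLES = 5_760_000  # samples per channel kept after trimming

duration_s = NUM_SAMPLES / SAMPLE_RATE               # length of the snippet in seconds
bits_per_channel = NUM_SAMPLES * BIT_DEPTH           # data bits in one channel
pcm_bytes = NUM_SAMPLES * CHANNELS * BIT_DEPTH // 8  # PCM payload for both channels
pcm_mib = pcm_bytes / 2**20                          # same payload in MiB

print(duration_s, bits_per_channel, pcm_bytes, round(pcm_mib, 2))
```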

This also makes it easy to compare recordings (i.e. difference testing), as no gain adjustment or sample alignment is required, which reduces potential test errors. Just load the original track and the comparison track into a digital audio editor of your choice, invert one of the tracks, and sum them together. The result is the difference track, which can be saved, listened to, and analyzed in a digital audio analysis application.
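The invert-and-sum step can also be expressed in a few lines of code. This is a sketch, not the Audacity procedure itself: `null_test` and `read_pcm24` are hypothetical helper names, and the reader assumes plain 24-bit PCM WAV files that have already been trimmed to the same start sample and length.

```python
import wave

def read_pcm24(path):
    """Return the interleaved 24-bit samples of a PCM WAV file as Python ints."""
    with wave.open(path, "rb") as w:
        if w.getsampwidth() != 3:
            raise ValueError("expects 24-bit PCM")
        raw = w.readframes(w.getnframes())
    # each sample is 3 little-endian bytes in two's complement
    return [int.from_bytes(raw[i:i + 3], "little", signed=True)
            for i in range(0, len(raw), 3)]

def null_test(original, comparison):
    """Invert `comparison`, sum it with `original`, and return the difference track."""
    if len(original) != len(comparison):
        raise ValueError("tracks must be trimmed to the same length")
    # original + (-comparison): identical samples cancel to exactly 0
    return [a + (-b) for a, b in zip(original, comparison)]
```

Working on integers keeps the sum exact, so a bit-identical pair produces a difference track of all zeros rather than a very quiet residue.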

Now that I have recorded the digital output (i.e. data stream) of JRiver MC18, I can compare the recording to the original file stored on the hard drive. I edited the original music file the same way as the recording with respect to start sample and total number of samples. If the two are bit-identical, there will be no output in the difference file. If there is no output, there is nothing to hear.

Here are the recording and the original file loaded in Audacity, with one of the stereo tracks inverted, both tracks selected, and the frequency spectrum plotted:

No difference output at all at the -144 dB theoretical limit of 24 bit digital audio. This means that the recorded bit-stream from JRiver is bit-identical to the original music file stored on the computer’s hard drive. As an aside, the original music file is in the losslessly compressed FLAC format. I used JRiver’s convert format utility to convert from FLAC to WAV, and that was also bit-perfect.
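A spectrum plot can only show down to its own floor, so a complementary check is to compare the raw PCM data directly. The sketch below (the name `bit_identical` is hypothetical) assumes both files are plain PCM WAVs trimmed the same way; any single changed bit makes it return `False`.

```python
import wave

def bit_identical(path_a, path_b):
    """True if both WAV files share a format and their PCM data is byte-for-byte equal."""
    with wave.open(path_a, "rb") as a, wave.open(path_b, "rb") as b:
        fmt_a = (a.getnchannels(), a.getsampwidth(), a.getframerate())
        fmt_b = (b.getnchannels(), b.getsampwidth(), b.getframerate())
        if fmt_a != fmt_b or a.getnframes() != b.getnframes():
            return False
        # compare every sample bit of the audio payload
        return a.readframes(a.getnframes()) == b.readframes(b.getnframes())
```

This compares only the audio payload, so metadata differences (tags, header chunks) between a converted file and a recording do not affect the result.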

Just for fun, I checked the digital output from other software music players such as Foobar and JPLAY (including JPLAY drivers for both JRiver and Foobar, plus JPLAY’s beach and river engines), all tested bit-perfect. Further, I compared FLAC versus WAV lossless file formats, also bit-perfect.

Now that I have established a bit-perfect baseline, how far away from bit-perfect can I detect an audible difference? Let’s start with amplitude audibility testing first.

Note: if you are following along with the downloaded files, adjust the volume to your typical listening level and remember the setting. The idea is not only to compare the files; over the course of the listening tests, the level of the difference files will drop until they become inaudible. It is educational to listen to the difference files throughout the test at the same monitor level.

This approach helps put into perspective how we perceive relative loudness. The only way to achieve this is to set the volume control once and leave it there. However, occasionally we will need to increase the volume to maximum to find the noise floor. Be sure to mark the original level so that it can be returned to.

Amplitude Audibility Testing

This listening test is designed to vary the amount of digital eq (i.e. an inserted filter) until I can no longer hear a difference compared to the original music file. Then I can measure how far away from bit-perfect the eq’d file is. Most importantly, I can listen to the difference file, which contains the absolute difference between the eq’d file and the original file.

For the digital eq, I am using SonEQ, which is a free VST plugin, one of over seven thousand digital audio plugins available at KVRAudio.

Let’s start at a level where our ears/brain should hear an audible difference. Using the JRiver MC18 DSP engine, I plug in SonEQ and control its amplitude, center frequency, and bandwidth (or Q).

Looking at the MID frequency control, I added 4 dB of gain at 2 kHz with a wide bandwidth. Our ears' response is the most sensitive in the 2 kHz to 5 kHz range and I wanted to give our ears the best opportunity to hear any differences. All other settings are turned off or set to 0.
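To make "a 4 dB boost at 2 kHz" concrete, here is a sketch of a generic peaking (bell) filter using the well-known RBJ Audio EQ Cookbook biquad formulas. This is an assumption for illustration: SonEQ's internal filter design is not documented here, and the Q of 0.7 used below stands in for "wide bandwidth" and is hypothetical.

```python
import cmath
import math

def peaking_eq(gain_db, f0, fs, q):
    """RBJ cookbook peaking-EQ biquad; returns (b, a) normalised so that a[0] == 1."""
    A = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    cos_w0 = math.cos(w0)
    b = [1 + alpha * A, -2 * cos_w0, 1 - alpha * A]
    a = [1 + alpha / A, -2 * cos_w0, 1 - alpha / A]
    return [x / a[0] for x in b], [x / a[0] for x in a]

def response_db(b, a, f, fs):
    """Magnitude response of the biquad at frequency f, in dB."""
    z1 = cmath.exp(-1j * 2 * math.pi * f / fs)  # z^-1 evaluated on the unit circle
    num = b[0] + b[1] * z1 + b[2] * z1 * z1
    den = a[0] + a[1] * z1 + a[2] * z1 * z1
    return 20 * math.log10(abs(num / den))

def apply_biquad(b, a, x):
    """Filter a list of float samples (Direct Form I)."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y

b, a = peaking_eq(4.0, 2000.0, 96000.0, 0.7)
for f in (100, 1000, 2000, 5000, 20000):
    print(f, round(response_db(b, a, f, 96000.0), 2))
```

A peaking filter of this kind leaves the response at 0 dB far from the center frequency and reaches the full boost only at 2 kHz, which is why the difference signal ends up concentrated around that region.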

Following the differencing test procedure, where I am comparing the eq’d recording versus the original file (inverted), here is the difference file that can be listened to.

4dB difference.wav (Note all files are 33MB in size.)

Remember, this is the absolute difference between the original Tom Petty Refugee file on disk and the +4 dB digitally equalized file that was played back in JRiver and recorded in Audacity.

Now that we know what the difference sounds like, here is the original file and the +4 dB recorded file, in no particular order. I have labeled them A and B without giving away which file is which.

Even listening casually, I can hear the difference between the two files. Adding the files to Foobar’s ABX Comparator, I was able to identify the eq’d file 10 out of 10 times. I would expect the majority of folks participating in the listening test to hear the difference.
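How convincing is 10/10 in an ABX test? If a listener were purely guessing, each trial would be a coin flip, so the chance of scoring at least k out of n follows the binomial distribution. This sketch (`abx_p_value` is a hypothetical name) computes that one-sided probability.

```python
from math import comb

def abx_p_value(correct, trials):
    """Probability of getting at least `correct` of `trials` right by guessing (p = 0.5)."""
    return sum(comb(trials, k) for k in range(correct, trials + 1)) / 2 ** trials

print(abx_p_value(10, 10))  # chance of a perfect score by luck alone
print(abx_p_value(9, 10))
```

For 10/10 this is 1/1024, i.e. roughly a 0.1% chance of a lucky perfect run, which is why a perfect score is usually taken as a clear positive result.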

From a measurement perspective, here is the frequency analysis of the difference file:

The difference, i.e. what the digital eq added, is a signal of approximately -40 dB at its peak.

Let’s dial the eq back to a 1 dB boost instead of 4 dB.

Here is the difference file:

I can still hear it at regular listening volume, but it is definitely down in level compared to the 4 dB difference file.

Here are the original and 1 dB boosted files (in no order):

Can you hear a difference? I found it harder to identify a difference in this one, either casually or with ABX comparison. I would say this is close to my audibility threshold.

Here is the frequency analysis of the difference file:

Now the difference is approximately -50 dB, which is 10 dB below the difference for the 4 dB recorded file that we just listened to. In terms of perceived loudness, a 10 dB drop is heard as roughly half as loud. See Information about Hearing, Communication, and Understanding for reference.

Ok, now let’s digitally eq the original file with 0.1 dB gain. Here is the difference file:

I can hear it at the regular listening volume, but from a perceived loudness level, it is much softer.

Here are the original recording and 0.1 dB gain files:

Can you hear a difference? I cannot. It is educational to go back to the difference file and listen again. That’s quite an audible level difference between the difference file and program level music files.

Here is the frequency analysis of the difference file:

The difference file level is about -70 dB. In terms of perceived loudness, relative to the -40 dB file (with 4 dB of eq), it is 8 times softer. Relative to the -50 dB file (with 1 dB of eq), it is 4 times softer. However, this is nowhere close to bit-perfect (i.e. -144 dB). Our ears' response is logarithmic: every 10 dB of change is perceived as a doubling or halving of loudness, so a difference of 70 dB is perceived as 128 times louder or softer. That’s why our ability to detect audible changes falls off rapidly (the cliff) once we near our audibility threshold: our ears hear logarithmically, not linearly, in both amplitude and frequency. Listening to the difference files at the same monitoring level gives you a sense of how quickly it goes from audible to nothing. Listening to the difference files in the next test at the same volume will also give you a sense of what logarithmic means from a perceived loudness perspective.
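The loudness figures above follow from the rule of thumb that each 10 dB step doubles or halves perceived loudness. The sketch below applies that rule and, for contrast, the physical amplitude ratio (20·log10) for the same dB change; both function names are hypothetical, and the 10 dB rule is an approximation rather than an exact model of hearing.

```python
def loudness_ratio(delta_db):
    """Rule-of-thumb perceived loudness ratio: every 10 dB doubles (or halves) loudness."""
    return 2 ** (delta_db / 10)

def amplitude_ratio(delta_db):
    """Physical amplitude (sound-pressure) ratio for the same dB change."""
    return 10 ** (delta_db / 20)

for delta in (10, 20, 30, 60, 70):
    print(delta, loudness_ratio(delta), round(amplitude_ratio(delta), 1))
```

For 70 dB, the rule of thumb gives a perceived ratio of 128 while the amplitude ratio is about 3162, which illustrates how strongly the ear compresses level differences.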

Bit-depth Audibility Testing

In JRiver’s DSP engine is a bit-depth simulator in which bit-depth can be varied, one bit at a time:

Again, let’s start with something that should be audible to the majority of listeners. I am going to reduce the bit-depth to 8 bits:

I also turned dither off to give us the worst case possible. The “rule-of-thumb” relationship between bit-depth and S/N is, for each 1-bit increase in bit depth, the S/N will increase by 6 dB. 24-bit digital audio has a theoretical maximum S/N of 144 dB. Therefore, 8 bit resolution will have a dynamic range of 48 dB. See Audio bit-depth for reference.
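The 6 dB-per-bit rule of thumb comes from the ratio between full scale and one quantization step, 20·log10(2^N), which is about 6.02 dB per bit. This sketch compares the rule of thumb with that exact step ratio for the bit depths used in this article (function names are hypothetical).

```python
import math

def dr_rule_of_thumb(bits):
    """Dynamic range using the article's 6 dB-per-bit rule of thumb."""
    return 6 * bits

def dr_exact(bits):
    """Ratio of full scale to one quantization step, in dB."""
    return 20 * math.log10(2 ** bits)

for bits in (8, 10, 12, 14, 20, 24):
    print(bits, dr_rule_of_thumb(bits), round(dr_exact(bits), 1))
```

The two agree to within a fraction of a dB at low bit depths and differ by about half a dB at 24 bits (144 vs. roughly 144.5 dB), so the rule of thumb is accurate enough for reading meters.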

I recorded the output with the reduced bit-depth (8 bits) and created a difference file. Remember, try listening to this file at your regular volume and keep the volume the same for the remainder of the listening tests.
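A rough model of what a bit-depth reduction without dither does to 24-bit samples is sketched below. JRiver's simulator is not documented here, so this assumes simple round-to-nearest requantization with clipping at full scale (truncation would be another plausible choice); `reduce_bit_depth` and `difference` are hypothetical names.

```python
def reduce_bit_depth(samples24, bits):
    """Requantize 24-bit integer samples to `bits` bits without dither (round to nearest)."""
    step = 1 << (24 - bits)                # one LSB at the new bit depth, in 24-bit units
    lo, hi = -(1 << 23), (1 << 23) - step  # largest representable values at the new depth
    out = []
    for s in samples24:
        q = ((s + step // 2) // step) * step  # round to the nearest multiple of step
        out.append(min(max(q, lo), hi))       # clip values that round past full scale
    return out

def difference(original, processed):
    """Difference track: the quantization error added by the bit-depth reduction."""
    return [a - b for a, b in zip(original, processed)]
```

Without dither, this error is correlated with the music rather than being a steady hiss, which is part of why the 8-bit difference file sounds so unpleasant.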

It is an ear-opening experience to listen to the difference file. This is what to listen for when comparing (casually or ABX) the original file with the reduced bit-depth recording.

Again, I could pick this out 10/10 times in an ABX test.

Rather than looking at the frequency analysis, the meter level on the difference file in Audacity will validate whether I have 48 dB of dynamic range:
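A meter reading like this can be reproduced numerically by expressing the difference samples relative to 24-bit full scale. The sketch below (hypothetical function names) computes peak and RMS levels in dBFS. Which of these Audacity's meter displays by default is not assumed here. With round-to-nearest requantization to 8 bits, the error peaks at half an 8-bit step, 2^15 in 24-bit units, which is about -48.2 dBFS.

```python
import math

FULL_SCALE_24 = 1 << 23  # 8,388,608: magnitude of a full-scale 24-bit sample

def peak_dbfs(samples):
    """Peak level relative to 24-bit full scale, in dBFS."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak / FULL_SCALE_24) if peak else float("-inf")

def rms_dbfs(samples):
    """RMS level relative to 24-bit full scale, in dBFS."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20 * math.log10(rms / FULL_SCALE_24) if rms else float("-inf")
```

An all-zero difference track (a bit-perfect result) returns negative infinity, which is the numerical equivalent of "no output at all".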

Sure enough, right on -48 dB.

How about 10 bit resolution?

10bit difference.wav (getting quieter)

And the two files, one recorded at 10 bit resolution and the other the original file:

Can you hear a difference? I found it harder to tell a difference unless I cranked the headphone volume up and ABX’d during the softest passage of the song, which is short.

The difference file of 10 bit resolution x 6 dB per bit should measure -60 dB on the meters:

Sure enough.

How about 12 bit resolution?

At regular volume this is way down in level, and it is hard to imagine how one would hear this noise over program-level music:

At 12 bit resolution we should be getting a dynamic range of 72 dB:

I found I could not tell the difference between the music files, either casually or ABX. Interestingly, the dynamic range of the 60 second snippet of the original music file is 12:

To be fair, given that this is a rock song, there are few quiet passages which make it difficult to hear the noise floor as it is being masked by the music. Given an orchestral piece with a wider dynamic range and turning up the headphone volume, (and if I had a quieter listening environment), it may be possible to hear a difference, even up to 13 or 14 bits.

Speaking of 14 bits:

This is considerably down in level as perceived by my ears. At regular listening level, I can’t hear the noise at all. I have to turn the volume up near maximum to hear the noise.

Can you hear a difference? I cannot.

Again the meters read the correct level (i.e. 14 bits x 6 dB per bit):

For fun, I tried a bit-depth of 20 bits.

Listening to the difference file with my headphones turned up to maximum, I can’t hear anything at all. The 20 bits file is 60 dB quieter than the 10 bits file. From a loudness perspective, 60 dB of change is 64 times softer. Over the cliff we go. Therefore it is not physically possible for me to hear a difference when comparing the two program-level files.

No surprise as 20 bits equates to -120 dB noise floor. Can be measured, but can’t be heard (by me).

Conclusion

I set out to determine how far away from bit-perfect I can hear an audible difference in sound quality. In the case of adjusting amplitude with a digital eq, my audibility threshold is about 1 dB of change. 4 dB of eq was easy to hear, but I could not detect a 0.1 dB eq change. In the case of adjusting bit-depth, my audibility threshold is around 10 to 12 bits, using rock music as the sample. At 8 bits I could easily detect a change, but not at 12 bits. In both the amplitude and bit-depth test cases, it appears that my audibility threshold level is around -70 dB when comparing an altered file to the original file.

No question, folks will hear differently, but results are likely to fall within a bell curve around the ranges mentioned above. Of course, different eq curves, music samples, gear, background noise while listening, hearing capability, age, pure randomness, etc., will also vary the results, but they should not be too far off.

The music files can be downloaded and listened to in order to determine one’s own audibility threshold. Or, if you have access to an ADC with a driver that supports multi-client applications, the above tests can be repeated, with similar results.

When lossless file formats and digital audio music players are tested and measure bit-perfect, it means the bits entering the DAC have not been altered in any way compared to the bits stored in the music file on the hard disk. If the bits have not been altered, there is nothing in the difference file. If there is nothing in the difference file, there is nothing to hear.

Having spent 10 years as a professional recording/mixing engineer during the introduction of digital audio in the ’80s, and 20 years as a software engineer, I think some folks may underestimate the power of digital audio software. While we are discussing 24 bit audio here, most modern digital audio software uses a 64 bit audio engine. The precision offered by 64 bit audio engines is billions of times greater than the best hardware can utilize. In other words, it is bit-perfect on all known hardware. That is why, if you read my article on sonic signatures, this hardware device (whose sonic signature virtually anyone who listens to pop/rock/blues, etc., will have heard) is 100% emulated in software with the same sonic signature. Emulating audio hardware in digital signal processing software has been going on for some time in the pro audio industry. Many in the pro audio industry concluded years ago that all Digital Audio Workstations (DAWs) sound identical when outputting bit-perfect audio.
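One way to see how much headroom a 64-bit floating-point engine has over 24-bit audio is a round-trip test: apply a gain in double precision, undo it, and requantize to 24-bit integers. This sketch assumes the engine uses IEEE 754 double precision (Python's `float`), which has a 53-bit significand; some engines may use other internal formats. The name `gain_round_trip` is hypothetical.

```python
def gain_round_trip(samples24, gain_db):
    """Apply a gain and its inverse in 64-bit floats, then requantize to 24-bit integers."""
    g = 10 ** (gain_db / 20)
    out = []
    for s in samples24:
        y = float(s) * g      # the processing step, carried out in double precision
        y = y / g             # undo it
        out.append(round(y))  # back to an integer 24-bit sample
    return out

samples = list(range(-(1 << 23), 1 << 23, 4099))
print(gain_round_trip(samples, 4.0) == samples)
```

The rounding error introduced by the two float operations is many orders of magnitude smaller than one 24-bit step, so every sample comes back exactly: the processing itself adds nothing that survives requantization.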

This brings me to my point on what’s next.


Using this binaural microphone, I am going to record my test song at the listening position. Using my ears and differencing technique, I should be able to compare what is being heard at the listening position with the original music file to see how far off it is.

It should come as little surprise that there will be a huge difference factoring in analog electronics, mechanical speakers, and room acoustics. Therefore, it is likely that very few audio playback systems are accurately reproducing what is stored in the music file.

However, using the power of 64 bit digital signal processing (DSP), as in digital room correction software, I can alter the bit-stream to more closely match the bits stored in the original music file. Why would I want to do that? For me, the most musical enjoyment comes from knowing that I am getting the most accurate representation of the music stored in the media file on disk arriving at my ears in the listening position.

Until next time, enjoy the music!


About the author

Mitch “Mitchco” Barnett


I love music and audio. I grew up with music around me as my Mom was a piano player (swing) and my Dad was an audiophile (jazz). At that time Heathkit was big and my Dad and I built several of their audio kits. Electronics was my first career and my hobby was building speakers, amps, preamps, etc., and I still DIY today. I also mixed live sound for a variety of bands, which led to an opportunity to work full-time in a 24 track recording studio. Over 10 years, I recorded, mixed, and sometimes produced over 30 albums, 100 jingles, and several audio for video post productions in a number of recording studios in Western Canada. This was during a time when analog was going digital and I worked in the first 48 track all-digital studio in Canada. Along the way, I partnered with some like-minded audiophile friends and opened up an acoustic consulting and manufacturing company. I purchased a TEF acoustics analysis computer, which was a revolution in acoustic measuring as it was the first time sound could be measured in 3 dimensions. My interest in software development drove me back to University and I have been designing and developing software ever since.
